The Latest in Literacy: 4/18/26

Sloppy education research, state training that works, weak curriculum getting overlooked, Nordic countries bin devices. Is this the spiciest edition yet?

By any reasonable estimation, Ed Tech Backlash was the story of the week. It continues to reverberate everywhere.

You probably know that, so I’ll lead with the literacy and learning stories you might’ve missed.

If you’re in the Northeast, please consider coming to NYC for the ResearchEd conference in two weeks. It’s a really special event with a stellar speaker list (more below). I’ll be presenting on the dearth of research in the curriculum realm.

Viral For Good Reason

Folks are fired up about Kelsey Piper’s Education research is weak and sloppy. Why?

Hot takes in the footnotes [1]…

Research Roundup

To combat the research void, let’s lead with some research reflections:

A study found that Tennessee’s hands-on Reading360 training improved student outcomes. Go Vols! I called for this training to become the national model.

While we’re on the subject of state training, don’t miss the study on the weak efficacy of LETRS training, which states continue to embrace like lemmings.

Jared Cooney Horvath detailed the iReady research void.

Horvath also collected a number of studies to prove that Digital Tests Don’t Require Digital Classrooms.

Freddy Hiebert, Kristen Conradi Smith, and colleagues published an important study on reading intervention. It’s highly relevant to the conversation about iReady, IMHO.

Curriculum Matters <she screams into the void>

A pair of popular articles come with a caution: we need to focus a lot more on curriculum quality.

First, an article on SFUSD’s literacy turnaround reported that a third of teachers aren’t using the district’s new curriculum. The reporter interviewed Mississippi accountability hawk Rachel Canter, who expressed outrage about the lack of accountability for curriculum use.

But friends, SFUSD adopted Into Reading, the bloated curriculum with no books in chapter book grades. It also falls short on knowledge-building [2]. I’d be shoving it aside, too, if I taught in San Francisco. Also, SFUSD selected Creative Curriculum for PreK, an unstructured curriculum notoriously weak on early reading and math. None of this is good. None of this is mentioned in the article.

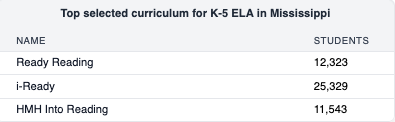

While we’re talking about curriculum quality, have I mentioned that Into Reading is one of the top 3 curricula used in Mississippi, and iReady is #1?? I did mention it, actually [3]. Someone should hold Mississippi accountable for letting its curriculum shortcomings fester.

On the positive side: Meriden, CT was featured for punching above its weight in literacy. Yeah! The article speaks of a smooth curriculum adoption… but won’t tell us which curriculum. Sigh. After scouring the CT state database and school board docs, I gather that Meriden uses Fundations with Geodes, Heggerty, and Core Knowledge for comprehension. (I think.)

Journalist friends, if I want to know this detail, so do other readers. Can we puh-lease include these nuances?

Learning on Learning

Michele Caracappa unpacks some gut-punch research on intervention, finding “students grew more when they received no additional support whatsoever compared to students placed in the most intense Tier II or III intervention.” Coherence matters, y’all.

Kristen McQuillan’s ode to Dolly Parton and the power of practice asks an essential question: “What should students be practicing, over and over again?”

Literacy Zeitgeist

The best response to the “over-teaching phonics” discourse came from Kata Solow, with notes on “right-sizing phonics instruction” and ten other thoughts you should read.

The one that made me verklempt: It’s Not Too Late: Teaching a High School Student to Read From the Ground Up by Faith Howard.

Miah Daughtery captures the civilizational risk lurking in passage popcorn curriculum: In Defense of the Humble Novel.

Doug Lemov resurfaced an excellent teacher video showcasing the FASE reading approach.

The viral video of the week was Jared Cooney Horvath outing the College Board for watering down SAT expectations in ELA.

Ed Tech Backlash Watch

The backlash groweth.

Here’s what led the “News” segment of Twitter this week:

Both articles were must-reads.

You simply must see this viral article from The Times in headline format. Whew.

The BBC gave us Back to books - Sweden’s schools cutting back on digital learning.

Two hundred parents in affluent Lower Merion, PA signed a petition to opt out of devices. The district says they can’t. I predict this “standoff” will recur nationally.

Meghna Chakrabarti’s On Point radio show gave us a roundup: The teachers pushing back on screens in schools.

EduChatter

The federal DOE released a list of priorities—essentially, signals about what they want to fund. Literacy made the list.

Eight states are projected to have double-digit enrollment declines in the next five years, according to a Bellwether report.

Emily Oster challenges the paper claiming smartphones caused the global learning decline. Her take: it’s the pandemic.

What Happens in San Diego

The annual ASU+GSV conference—where Ed Tech goes to get funded—was a more subdued affair this year.

It was still a whirlwind, best characterized as a rave by Andy Rotherham, who reminded us that actual learning gets short shrift in these spaces: “The education field, ironically given what we’re supposed to be doing, absolutely loves substitutes for content and content-rich learning. It’s like a drug around here.” Amen. Read the whole thing.

Frankly, I was pleased that a Southern Surge panel made the ASU+GSV agenda, and thrilled to moderate the conversation. John White made some excellent points about our national capacity to scale the work.

Meme of the Week

Thank you to Vince Boley for reviving this gem:

Coming Attractions

April 30: Tim Odegard and Megan Gierka present Rome Wasn’t Built on a Scope & Sequence.

May 2: Join me at ResearchEd NYC! It’s a really special, affordable event with an outstanding speaker list: Zaretta Hammond, Natalie Wexler, Kristen McQuillan, Jim Heal, Zach Groshell, Steve Chiger, Gene Tavernetti, +++.

June 30: the Teaching That Succeeds symposium. The organizers welcome speaker proposals.

September 26: ResearchEd St. Louis. Apply to speak by 5/15.

Beyond the EduSphere

“Two weeks without mobile internet improved mental health more than antidepressants and reversed roughly 10 years of attentional decline.” Get offline and get happier, y’all!

[1] Education has a research problem. But there is some good research in education (and I can see education researchers hustling to write pieces to say so, almost as quickly as Mike Petrilli shared caveats on Twitter). Still, quality research doesn’t carry the day in K-12, and I welcome Kelsey’s spotlight on the problems.

My own sense: education has less of a research problem than a Culture of Educational Preferences problem. The Educational Preference Problem is the reason crummy studies like Boaler’s get little push-back in the first place. Much of the field is chasing confirmation bias for the way they want instruction to look (or the flawed product they want to sell to schools).

This issue isn’t exclusive to Jo Boaler and Balanced Literacy authors, either. In the Science of Reading era, some not-so-sciencey practices have been promoted, too. The same people who shriek “Follow the Science!!” sometimes follow their BFFs into promoting unproven theories like oral-only phonemic awareness. In fact, the tendency to follow fads over proven practices is one factor in the current dustup about some schools “over-teaching” phonics (which is largely about over-complicating phonics).

What can we do about it?

I have been jumping up and down on social media, trying to get people to rally behind greater transparency about the products used in schools, specifically because it would enable better research. We’re overdue to have a National Curriculum Database.

I also like the idea of a National Reading Panel reprise, as an opportunity to refocus the field on the balance of research findings, this time with more implementation science (like a focus on dosage).

Former Louisiana Commissioner John White also cheered the idea of a new NRP during our ASU+GSV panel this week. I know I’m not alone!

Those are my ideas for tackling the Culture of Educational Preferences.

Here’s my favorite part of Kelsey’s thinking:

Over the last two decades, it has become clear that social science work from the second half of the 20th century often does not hold up at all. The statistical methods used simply weren’t good enough to support the conclusions reached. P-hacking — or running tons of analyses until you find one that is interesting to report on — was widespread. The rate of outright fraud was much higher than many had assumed.

In response, many disciplines changed how they practiced science. They started requiring scientists to share their code and data, which makes it much more difficult to commit fraud or to introduce honest errors into the literature. They started encouraging, and in some cases requiring, “preregistration” — announcing the analysis you plan to do before you do it, to cut down on p-hacking. Various efforts got underway to reward replication and combat publication bias (the tendency to not share results if they’re not interesting), and people developed new statistical toolsets to detect publication bias and understand the overall state of the literature.

The field of education did none of this. More than half of well-regarded psychology journals have adopted transparency and reporting requirements. Virtually all well-regarded economics journals recommend or require full sharing of the data and code that went into a paper. In education, only a small minority of journals even recommend code and data sharing, and none require it.

The fact that it’s normal to report on a school “confidentially,” without naming it, makes journals reluctant to require data sharing, and researchers almost never want to go to the extra effort to share their work if it’s not required or strongly encouraged.

It’s easy to say as an outsider to the field, but I think the idea of reporting on a school’s results “confidentially” just needs to go. Individual student results should be confidential, of course, but key information about school performance should be, and already is, public.

The norm that you can conceal at which school you conducted an intervention makes life easier for people who are committing fraud: no one can easily catch the fraud by calling up the school to ask if the data is accurate, or look at how it compares to other, publicly available data about the performance of the same students.

But the problems go much deeper than that. Studies that are comprehensive and well-designed enough to find meaningful results are generally large and expensive. If every researcher is trying to prove the merits of their own quixotic curriculum by convincing one school at a time to enroll in a pilot and try it, we’ll get what we currently have, which is a huge number of fairly low-quality studies of individual one-off interventions — none of which constitute convincing evidence because they simply don’t have enough statistical power.

Instead, it would be much better to have fewer, much higher-quality studies which look at the rollout of a new policy across a district or across many districts, conducted and analyzed according to the (much higher) standards for careful work from disciplines like economics.

But every researcher’s individual incentives run in the exact opposite direction — as long as the journals will continue publishing underpowered work.

[2] The Knowledge Matters Campaign reviews curricula for their knowledge-building properties. The team includes literacy leaders who were core to the Common Core Standards authoring effort. Ten curricula make the cut. Into Reading isn’t one of them.

None of the basal programs make the cut, in fact.

[3] Re-sharing this footnote from my November article on the curriculum-led reform in Louisiana and Tennessee:

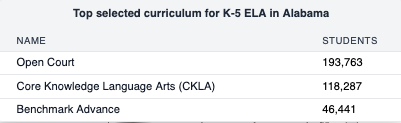

Across the country, you’ll find at least one and sometimes three lesser programs on the most-used list in every state outside LA/TN. Even Mississippi and Alabama are still working on curriculum reform. Here are the top programs in those states, noting that it’s a smallish sample in Mississippi (representing approximately 26% of Mississippi schools):

As I have written previously, these states have phased in curriculum reforms. Some believe that Mississippi’s 8th grade gains have lagged its 4th grade gains because it needs to address this gap.

Thank you for taking the time to gather this research. I try to read at least some parts each week. A couple thoughts/questions:

I watched the video of a teacher using FASE reading (I had to look up what it was) and I wonder, how is that different from round robin reading? I understand that the focus is on reading with prosody, but she is still randomly calling on students. Did they practice and hear the sections before, or is this a cold read?

I went through LETRS training and I think the missing link is that teachers don't apply what they learn. If a school/district/state made both coaching and time to adjust and create lessons using what they've learned an integral part, I think results would be way different. Most schools in my area have the teachers watch the training videos, but there is no follow-up on whether you are using it or not. You can correct me if I'm wrong, but I think that's the magic bullet in Tennessee's teacher training--immediate and relevant development of materials and ongoing support with modeling/coaching.

Loving the newsletter!